This article explains how to compute the main descriptive statistics in R and how to present them graphically. To learn more about the reasoning behind each descriptive statistic, how to compute them by hand and how to interpret them, read the article 'Descriptive statistics by hand'.

To briefly recap what has been said in that article, descriptive statistics (in the broad sense of the term) is a branch of statistics aiming at summarizing, describing and presenting a series of values or a dataset. Descriptive statistics is often the first step and an important part of any statistical analysis. It allows us to check the quality of the data and it helps to 'understand' the data by giving a clear overview of it. If well presented, descriptive statistics is already a good starting point for further analyses. There exist many measures to summarize a dataset. They are divided into two types:

- location measures and

- dispersion measures

Location measures give an understanding of the central tendency of the data, whereas dispersion measures give an understanding of the spread of the data. In this article, we focus only on the implementation in R of the most common descriptive statistics and their visualizations (when deemed appropriate). See online or in the above-mentioned article for more information about the purpose and usage of each measure.

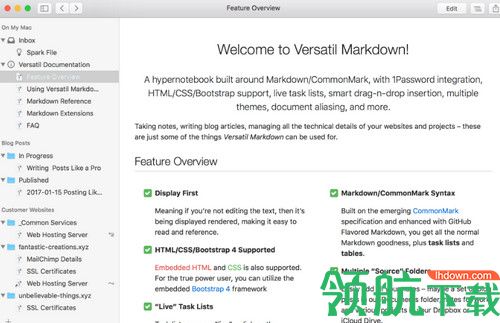

We use the dataset iris throughout the article. This dataset ships with R by default; you only need to load it by running iris:

Below a preview of this dataset and its structure:
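The listing for this step is missing here, so the following is a minimal sketch of loading and previewing the data. The object name dat matches the name used in the rest of the article; using head() and str() for the preview is an assumption.

```r
# Copy the built-in iris dataset into an object named dat
dat <- iris

# Preview the first 6 observations
head(dat)

# Structure: number of observations, variables and their types
str(dat)
```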

The dataset contains 150 observations and 5 variables, representing the length and width of the sepal and petal and the species of 150 flowers. Length and width of the sepal and petal are numeric variables and the species is a factor with 3 levels (indicated by num and Factor w/ 3 levels after the name of the variables). See the different variable types in R if you need a refresher.

Regarding plots, we present the default graphs and the graphs from the well-known {ggplot2} package. Graphs from the {ggplot2} package usually look better, but they require more advanced coding skills (see the article 'Graphics in R with ggplot2' to learn more). If you need to publish or share your graphs, I suggest using {ggplot2} if you can, otherwise the default graphics will do the job.

Tip: I recently discovered the ggplot2 builder from the {esquisse} add-in. See how you can easily draw graphs from the {ggplot2} package without having to code them yourself.

All plots displayed in this article can be customized. For instance, it is possible to edit the title, x and y-axis labels, color, etc. However, customizing plots is beyond the scope of this article so all plots are presented without any customization. Interested readers will find numerous resources online.

Minimum and maximum can be found thanks to the min() and max() functions:

Alternatively the range() function:

gives you the minimum and maximum directly. Note that the output of the range() function is actually an object containing the minimum and maximum (in that order). This means you can actually access the minimum with:

and the maximum with:
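As a sketch of the calls described in this section, assuming dat is a copy of iris and Sepal.Length is the variable of interest (rng is an illustrative object name):

```r
dat <- iris

# Minimum and maximum, one function each
min(dat$Sepal.Length)
max(dat$Sepal.Length)

# range() returns both values in a vector of length 2
rng <- range(dat$Sepal.Length)
rng

rng[1]  # first element: the minimum
rng[2]  # second element: the maximum
```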

This reminds us that, in R, there are often several ways to arrive at the same result. The method that uses the shortest piece of code is usually preferred as a shorter piece of code is less prone to coding errors and more readable.

The range can then be easily computed, as you have guessed, by subtracting the minimum from the maximum:

To my knowledge, there is no default function to compute the range. However, if you are familiar with writing functions in R, you can create your own function to compute the range:

which is equivalent to (max - min) presented above.
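Both ways of obtaining the range could look like the sketch below. The function name range2 is hypothetical, chosen only to avoid masking the base range() function.

```r
dat <- iris

# Range as maximum minus minimum
max(dat$Sepal.Length) - min(dat$Sepal.Length)

# Hypothetical helper: range2 is not a base R function
range2 <- function(x) {
  max(x) - min(x)
}

range2(dat$Sepal.Length)
```

A one-line alternative with base functions only is diff(range(dat$Sepal.Length)).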

The mean can be computed with the mean() function:

Tips:

- if there is at least one missing value in your dataset, use mean(dat$Sepal.Length, na.rm = TRUE) to compute the mean with the NA excluded. This argument can be used for most functions presented in this article, not only the mean

- for a truncated mean, use mean(dat$Sepal.Length, trim = 0.10) and change the trim argument to your needs
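The mean and the two tips can be tried out as follows. The vector x with an appended NA is an assumption added for illustration, since iris itself contains no missing values.

```r
dat <- iris

# Arithmetic mean
mean(dat$Sepal.Length)

# Illustrative vector with one missing value (not part of iris)
x <- c(dat$Sepal.Length, NA)
mean(x)                # returns NA because of the missing value
mean(x, na.rm = TRUE)  # missing value excluded before computing

# Truncated mean: drops 10% of the observations at each end
mean(dat$Sepal.Length, trim = 0.10)
```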

The median can be computed thanks to the median() function:

or with the quantile() function:

since the quantile of order 0.5 (\(q_{0.5}\)) corresponds to the median.
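A short sketch of both approaches, again assuming dat is a copy of iris:

```r
dat <- iris

# Median with the dedicated function
median(dat$Sepal.Length)

# Same value as the quantile of order 0.5
quantile(dat$Sepal.Length, 0.5)
```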

Like the median, the first and third quartiles can be computed thanks to the quantile() function and by setting the second argument to 0.25 or 0.75:

You may have seen that the results above are slightly different from the results you would have found if you had computed the first and third quartiles by hand. This is normal: there are many methods to compute them (R actually has 9 methods to compute the quantiles, selected with the type argument of quantile()!). However, the methods presented here and in the article 'descriptive statistics by hand' are the easiest and most 'standard' ones. Furthermore, results do not change dramatically between the two methods.
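The quartiles can be obtained as sketched below. The small vector toy is an assumption added to make the effect of the type argument visible, because ties in Sepal.Length can hide the difference between methods.

```r
dat <- iris

# First and third quartiles (default method, type = 7)
quantile(dat$Sepal.Length, 0.25)
quantile(dat$Sepal.Length, 0.75)

# Illustrative vector: two methods give different first quartiles
toy <- c(1, 3, 5, 7, 9, 11)
quantile(toy, 0.25, type = 7)
quantile(toy, 0.25, type = 6)
```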

Other quantiles

As you have guessed, any quantile can also be computed with the quantile() function. For instance, the \(4^{th}\) decile or the \(98^{th}\) percentile:
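For instance, a minimal sketch (the object q and the single call with a vector of probabilities are illustrative choices):

```r
dat <- iris

# 4th decile and 98th percentile, one call each
quantile(dat$Sepal.Length, 0.4)
quantile(dat$Sepal.Length, 0.98)

# Several quantiles at once by passing a vector of probabilities
q <- quantile(dat$Sepal.Length, c(0.4, 0.98))
q
```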

The interquartile range (i.e., the difference between the first and third quartile) can be computed with the IQR() function:

or alternatively with the quantile() function again:

As mentioned earlier, when possible it is usually recommended to use the shortest piece of code to arrive at the result. For this reason, the IQR() function is preferred to compute the interquartile range.
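The two routes to the interquartile range can be compared with the following sketch:

```r
dat <- iris

# Dedicated function
IQR(dat$Sepal.Length)

# Third quartile minus first quartile
quantile(dat$Sepal.Length, 0.75) - quantile(dat$Sepal.Length, 0.25)
```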

The standard deviation and the variance are computed with the sd() and var() functions:

Remember from the article 'Descriptive statistics by hand' that the standard deviation and the variance differ depending on whether we compute them for a sample or a population (see the difference between sample and population). In R, the standard deviation and the variance are computed as if the data represent a sample (so the denominator is \(n - 1\), where \(n\) is the number of observations). To my knowledge, there is no function by default in R that computes the standard deviation or variance for a population.
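A sketch of the sample versions, followed by population versions written by hand. The helpers pop_var and pop_sd are hypothetical names introduced here; they simply replace the denominator \(n - 1\) by \(n\).

```r
dat <- iris

# Sample standard deviation and variance (denominator n - 1)
sd(dat$Sepal.Length)
var(dat$Sepal.Length)

# Hypothetical helpers for the population versions (denominator n)
pop_var <- function(x) {
  mean((x - mean(x))^2)
}
pop_sd <- function(x) {
  sqrt(pop_var(x))
}

pop_var(dat$Sepal.Length)
pop_sd(dat$Sepal.Length)
```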

Tip: to compute the standard deviation (or variance) of multiple variables at the same time, use lapply() with the appropriate statistics as second argument:

The command dat[, 1:4] selects the variables 1 to 4 as the fifth variable is a qualitative variable and the standard deviation cannot be computed on such type of variable. See a recap of the different data types in R if needed.
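The tip could be applied as in this sketch (sds and vars are illustrative object names):

```r
dat <- iris

# Standard deviation of each of the four numeric variables
sds <- lapply(dat[, 1:4], sd)
sds

# Same idea with the variance
vars <- lapply(dat[, 1:4], var)
vars
```

lapply() returns a list; sapply() with the same arguments would return a named numeric vector instead.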

You can compute the minimum, \(1^{st}\) quartile, median, mean, \(3^{rd}\) quartile and the maximum for all numeric variables of a dataset at once using summary():

Tip: if you need these descriptive statistics by group use the by() function:

where the arguments are the name of the dataset, the grouping variable and the summary function. Follow this order, or specify the name of the arguments if you do not follow this order.
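Both calls are sketched below, with Species taken as the grouping variable (an assumption, since it is the only factor in iris):

```r
dat <- iris

# Summary of every variable in the dataset
summary(dat)

# Same summary computed separately for each species
res_by <- by(dat, dat$Species, summary)
res_by
```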

If you need more descriptive statistics, use stat.desc() from the package {pastecs}:

You can have even more statistics (i.e., skewness, kurtosis and normality test) by adding the argument norm = TRUE in the previous function. Note that the variable Species is not numeric, so descriptive statistics cannot be computed for this variable and NA are displayed.
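A sketch of these two calls, assuming the {pastecs} package is installed. The second call is restricted to the four numeric columns, which is a choice made here to avoid the NA column for Species.

```r
library(pastecs)

dat <- iris

# Extended descriptive statistics for every column
res <- stat.desc(dat)
res

# Adds skewness, kurtosis and a normality test
stat.desc(dat[, 1:4], norm = TRUE)
```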

The coefficient of variation can be found with stat.desc() (see the line coef.var in the table above) or by computing manually (remember that the coefficient of variation is the standard deviation divided by the mean):
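The manual computation might look like this (cv is an illustrative object name):

```r
dat <- iris

# Coefficient of variation: standard deviation divided by the mean
cv <- sd(dat$Sepal.Length) / mean(dat$Sepal.Length)
cv
```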

To my knowledge there is no function to find the mode of a variable. However, we can easily find it thanks to the functions table() and sort():

table() gives the number of occurrences for each unique value, then sort() with the argument decreasing = TRUE displays the number of occurrences from highest to lowest. The mode of the variable Sepal.Length is thus 5. This code to find the mode can also be applied to qualitative variables such as Species:

or:
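The mode lookup described above can be sketched as follows. Using summary() as the alternative for the factor Species is an assumption about what the second variant was.

```r
dat <- iris

# Occurrences of each unique value, most frequent first
tab <- sort(table(dat$Sepal.Length), decreasing = TRUE)
tab

# The mode is the name of the first element (a character string)
names(tab)[1]

# Same approach for a qualitative variable
sort(table(dat$Species), decreasing = TRUE)

# Counts per level of a factor
summary(dat$Species)
```

In iris the three species are equally frequent, so Species has no single mode.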

Another descriptive statistic is the correlation coefficient. A correlation measures the linear relationship between two variables.

Computing correlation in R requires a detailed explanation so I wrote an article covering correlation and correlation test.

table() introduced above can also be used on two qualitative variables to create a contingency table. The dataset iris has only one qualitative variable so we create a new qualitative variable just for this example. We create the variable size which corresponds to small if the length of the sepal is smaller than the median of all flowers, big otherwise:

Here is a recap of the occurrences by size:

We now create a contingency table of the two variables Species and size with the table() function:

or with the xtabs() function:
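The steps of this section can be sketched as below. The variable size is derived from Sepal.Length, following the later remark that size is based on that variable; tab is an illustrative object name.

```r
dat <- iris

# New qualitative variable: small if below the median sepal length
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# Occurrences by size
table(dat$size)

# Contingency table: Species in rows, size in columns
tab <- table(dat$Species, dat$size)
tab

# Same table with the formula interface
xtabs(~ dat$Species + dat$size)
```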

The contingency table gives the number of cases in each subgroup. For instance, there is only one big setosa flower, while there are 49 small setosa flowers in the dataset.

To go further, we can see from the table that setosa flowers seem to be smaller in size than virginica flowers. In order to check whether size is significantly associated with species, we could perform a Chi-square test of independence since both variables are categorical variables. See how to do this test by hand and in R.

Note that Species are in rows and size in column because we specified Species and then size in table(). Change the order if you want to switch the two variables.

Instead of having the frequencies (i.e., the number of cases) you can also have the relative frequencies (i.e., proportions) in each subgroup by wrapping the table() function inside the prop.table() function:

Note that you can also compute the percentages by row or by column by adding a second argument to the prop.table() function: 1 for row, or 2 for column:
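A sketch of the three variants, with size built from Sepal.Length as an assumption carried over from the contingency table:

```r
dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

tab <- table(dat$Species, dat$size)

# Proportions over the whole table (all cells sum to 1)
prop.table(tab)

# Proportions by row (each row sums to 1)
by_row <- prop.table(tab, 1)
by_row

# Proportions by column (each column sums to 1)
by_col <- prop.table(tab, 2)
by_col
```

Multiplying any of these by 100, and optionally wrapping the result in round(), gives percentages.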

See the section on advanced descriptive statistics for more advanced contingency tables.

Mosaic plot

A mosaic plot allows us to visualize a contingency table of two qualitative variables:

The mosaic plot shows that, for our sample, the proportion of big and small flowers is clearly different between the three species. In particular, the virginica species is the biggest, and the setosa species is the smallest of the three species (in terms of sepal length since the variable size is based on the variable Sepal.Length).

For your information, a mosaic plot can also be done via the mosaic() function from the {vcd} package:
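Both mosaic plots could be drawn as sketched here, assuming the {vcd} package is installed and size is built from Sepal.Length. The direction argument is an optional layout choice, not a requirement.

```r
library(vcd)

dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# Base R mosaic plot from a contingency table
tab <- table(dat$Species, dat$size)
mosaicplot(tab)

# {vcd} version with the formula interface
mosaic(~ Species + size, data = dat, direction = c("v", "h"))
```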

Barplots can only be done on qualitative variables (see the difference with a quantitative variable here). A barplot is a tool to visualize the distribution of a qualitative variable. We draw a barplot of the qualitative variable size:

You can also draw a barplot of the relative frequencies instead of the frequencies by adding prop.table() as we did earlier:

In {ggplot2}:
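The three barplots might be produced as follows. This is a sketch assuming {ggplot2} is installed and size is built from Sepal.Length; p is an illustrative object name.

```r
library(ggplot2)

dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# Base R: frequencies, then relative frequencies
barplot(table(dat$size))
barplot(prop.table(table(dat$size)))

# {ggplot2}: geom_bar() counts the observations per category
p <- ggplot(dat) +
  aes(x = size) +
  geom_bar()
p
```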

A histogram gives an idea about the distribution of a quantitative variable. The idea is to break the range of values into intervals and count how many observations fall into each interval. Histograms are a bit similar to barplots, but histograms are used for quantitative variables whereas barplots are used for qualitative variables. To draw a histogram in R, use hist():

Add the argument breaks = inside the hist() function if you want to change the number of bins. A rule of thumb (known as the square-root rule) is that the number of bins should be the rounded value of the square root of the number of observations. The dataset includes 150 observations so in this case the number of bins can be set to 12. (Sturges' rule, which hist() uses by default, is a different formula based on the base-2 logarithm of the number of observations.)

In {ggplot2}:

By default, the number of bins is 30. You can change this value with geom_histogram(bins = 12) for instance.
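A sketch of the histograms in base R and {ggplot2} (assumed installed). The object n_bins and the call to nclass.Sturges(), shown only for comparison with the square-root rule, are additions made here.

```r
library(ggplot2)

dat <- iris

# Default histogram
h <- hist(dat$Sepal.Length)

# Square-root rule for the number of bins
n_bins <- round(sqrt(nrow(dat)))
n_bins

# hist() treats a single number in breaks as a suggestion only
hist(dat$Sepal.Length, breaks = n_bins)

# Number of classes given by Sturges' formula, for comparison
nclass.Sturges(dat$Sepal.Length)

# {ggplot2} version with an explicit number of bins
p <- ggplot(dat) +
  aes(x = Sepal.Length) +
  geom_histogram(bins = n_bins)
p
```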

Boxplots are really useful in descriptive statistics and are often underused (mostly because they are not well understood by the public). A boxplot graphically represents the distribution of a quantitative variable by visually displaying five common location summaries (minimum, first quartile, median, third quartile and maximum) and any observation that was classified as a suspected outlier using the interquartile range (IQR) criterion. The IQR criterion means that all observations above \(q_{0.75} + 1.5 \cdot IQR\) or below \(q_{0.25} - 1.5 \cdot IQR\) (where \(q_{0.25}\) and \(q_{0.75}\) correspond to the first and third quartile respectively) are considered as potential outliers by R. The minimum and maximum in the boxplot are represented without these suspected outliers.

Seeing all this information on the same plot helps to have a good first overview of the dispersion and the location of the data. Before drawing a boxplot of our data, see below a graph explaining the information present on a boxplot:

Now an example with our dataset:

Boxplots are even more informative when presented side-by-side for comparing and contrasting distributions from two or more groups. For instance, we compare the length of the sepal across the different species:

In {ggplot2}:
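The boxplots of this section could be drawn as in this sketch, assuming {ggplot2} is installed (b and p are illustrative object names):

```r
library(ggplot2)

dat <- iris

# Single boxplot; the returned object holds the plotted statistics
b <- boxplot(dat$Sepal.Length)

# Side-by-side boxplots, one per species
boxplot(dat$Sepal.Length ~ dat$Species)

# {ggplot2} version
p <- ggplot(dat) +
  aes(x = Species, y = Sepal.Length) +
  geom_boxplot()
p
```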

A dotplot is more or less similar to a boxplot, except that observations are represented as points and there are no summary statistics presented on the plot:
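One way to draw such a dotplot is with dotplot() from the {lattice} package, which is distributed with R. Choosing {lattice} here is an assumption, since the text does not name the function used.

```r
library(lattice)

dat <- iris

# One row of points per species
d <- dotplot(dat$Sepal.Length ~ dat$Species)
d
```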

Scatterplots allow us to check whether there is a potential link between two quantitative variables. For this reason, scatterplots are often used to visualize a potential correlation between two variables. For instance, when drawing a scatterplot of the length of the sepal and the length of the petal:

There seems to be a positive association between the two variables.

In {ggplot2}:

Like boxplots, scatterplots are even more informative when differentiating the points according to a factor, in this case the species:

Line plots, particularly useful in time series or finance, can be created by adding the type = 'l' argument in the plot() function:
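The scatterplots and the line plot could be sketched as follows, assuming {ggplot2} is installed. Plotting Sepal.Length against the observation index for the line plot is an illustrative choice, since iris is not a time series.

```r
library(ggplot2)

dat <- iris

# Base R scatterplot
plot(dat$Sepal.Length, dat$Petal.Length)

# {ggplot2} scatterplot
p1 <- ggplot(dat) +
  aes(x = Sepal.Length, y = Petal.Length) +
  geom_point()
p1

# Points differentiated by species
p2 <- ggplot(dat) +
  aes(x = Sepal.Length, y = Petal.Length, colour = Species) +
  geom_point()
p2

# Line plot
plot(dat$Sepal.Length, type = "l")
```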

For a single variable

In order to check the normality assumption of a variable (normality means that the data follow a normal distribution, also known as a Gaussian distribution), we usually use histograms and/or QQ-plots.¹ See an article discussing the normal distribution and how to evaluate the normality assumption in R if you need a refresher on that subject. Histograms have been presented earlier, so here is how to draw a QQ-plot:
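In base R, a QQ-plot against the normal distribution can be sketched with qqnorm() and qqline() (qq is an illustrative object name):

```r
dat <- iris

# Points: sample quantiles against theoretical normal quantiles
qq <- qqnorm(dat$Sepal.Length)

# Reference line through the first and third quartiles
qqline(dat$Sepal.Length)
```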

Or a QQ-plot with confidence bands with the qqPlot() function from the {car} package:

If points are close to the reference line (sometimes referred to as Henry's line) and within the confidence bands, the normality assumption can be considered as met. The bigger the deviation between the points and the reference line and the more they lie outside the confidence bands, the less likely it is that the normality condition is met. The variable Sepal.Length does not seem to follow a normal distribution because several points lie outside the confidence bands. When facing a non-normal distribution, the first step is usually to apply the logarithm transformation to the data and recheck to see whether the log-transformed data are normally distributed. Applying the logarithm transformation can be done with the log() function.

In {ggpubr}:
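The package-based QQ-plots mentioned in this section might look like the sketch below, assuming {car} and {ggpubr} are installed. The recheck on the log-transformed variable is added as an illustration of the transformation step.

```r
library(car)
library(ggpubr)

dat <- iris

# QQ-plot with confidence bands from {car}
qqPlot(dat$Sepal.Length)

# Same check after a logarithm transformation
qqPlot(log(dat$Sepal.Length))

# QQ-plot from {ggpubr}
p <- ggqqplot(dat$Sepal.Length)
p
```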

By groups

For some statistical tests, the normality assumption is required in all groups. One solution is to draw a QQ-plot for each group by manually splitting the dataset into different groups and then draw a QQ-plot for each subset of the data (with the methods shown above). Another (easier) solution is to draw a QQ-plot for each group automatically with the argument groups = in the function qqPlot() from the {car} package:

In {ggplot2}:
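QQ-plots by group could be sketched as follows, assuming {car} and {ggplot2} are installed and using the size variable (built from Sepal.Length) as the grouping factor. Note that qplot() has been deprecated in recent {ggplot2} releases, so it may emit a warning.

```r
library(car)
library(ggplot2)

dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# One QQ-plot panel per group with {car}
qqPlot(dat$Sepal.Length, groups = dat$size)

# {ggplot2}: groups distinguished by both colour and shape
p <- qplot(
  sample = Sepal.Length, data = dat,
  col = size, shape = size
)
p
```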

It is also possible to differentiate groups by shape only or by color only. For this, remove one of the arguments col or shape in the qplot() function above.

A density plot is a smoothed version of the histogram and is used for the same purpose, that is, to represent the distribution of a numeric variable. The functions plot() and density() are used together to draw a density plot:

In {ggplot2}:
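Both density plots can be sketched like this, assuming {ggplot2} is installed (d and p are illustrative object names):

```r
library(ggplot2)

dat <- iris

# Base R: estimate the density, then plot it
d <- density(dat$Sepal.Length)
plot(d)

# {ggplot2} version
p <- ggplot(dat) +
  aes(x = Sepal.Length) +
  geom_density()
p
```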

The last type of descriptive plot is a correlation plot, also called a correlogram. This type of graph is more complex than the ones presented above, so it is detailed in a separate article. See how to draw a correlogram to highlight the most correlated variables in a dataset.

We covered the main functions to compute the most common and basic descriptive statistics. There are, however, many more functions and packages to perform more advanced descriptive statistics in R. In this section, I present some of them with applications to our dataset.

{summarytools} package

One package for descriptive statistics I often use for my projects in R is the {summarytools} package. The package is centered around 4 functions:

- freq() for frequency tables

- ctable() for cross-tabulations

- descr() for descriptive statistics

- dfSummary() for data frame summaries

A combination of these 4 functions is usually more than enough for most descriptive analyses. Moreover, the package has been built with R Markdown in mind, meaning that outputs render well in HTML reports. And for non-English speakers, built-in translations exist for French, Portuguese, Spanish, Russian and Turkish.

I illustrate each of the 4 functions in the following sections. Outputs that follow display much better in R Markdown reports, but in this article I limit myself to the raw outputs as the goal is to show how the functions work, not how to make them render well. See the setup settings in the vignette of the package if you want to print the outputs in a nice way in R Markdown.²

Frequency tables with freq()

The freq() function produces frequency tables with frequencies, proportions, as well as missing data information.

If you do not need information about missing values, add the report.nas = FALSE argument:

And for a minimalist output with only counts and proportions:
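The three variants of freq() could be written as in this sketch, assuming {summarytools} is installed and using Species as the variable (an assumption; any categorical variable works):

```r
library(summarytools)

dat <- iris

# Full frequency table, including missing data information
ft <- freq(dat$Species)
ft

# Without the rows and columns about missing values
freq(dat$Species, report.nas = FALSE)

# Minimalist output: counts and proportions only
freq(dat$Species,
  report.nas = FALSE, totals = FALSE,
  cumul = FALSE, headings = FALSE
)
```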

Cross-tabulations with ctable()

The ctable() function produces cross-tabulations (also known as contingency tables) for pairs of categorical variables. Using the two categorical variables in our dataset:

Row proportions are shown by default. To display column or total proportions, add the prop = 'c' or prop = 't' arguments, respectively:

To remove proportions altogether, add the argument prop = 'n'. Furthermore, to display only the bare minimum, add the totals = FALSE and headings = FALSE arguments:

This is equivalent to table(dat$Species, dat$size) and xtabs(~ dat$Species + dat$size) performed in the section on contingency tables.

To display results of the Chi-square test of independence, add the chisq = TRUE argument:³

The p-value is close to 0 so we reject the null hypothesis of independence between the two variables. In our context, this indicates that species and size are dependent and that there is a significant relationship between the two variables.

It is also possible to create a contingency table for each level of a third categorical variable thanks to the combination of the stby() and ctable() functions. There are only 2 categorical variables in our dataset, so let's use the tobacco dataset which has 4 categorical variables (i.e., gender, age group, smoker, diseased). For this example, we would like to create a contingency table of the variables smoker and diseased, and this for each gender:
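The cross-tabulation calls of this section are sketched below, assuming {summarytools} is installed and size is built from Sepal.Length. The tobacco data frame is the example dataset shipped with the package.

```r
library(summarytools)

dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# Row proportions by default
ctable(x = dat$Species, y = dat$size)

# Total proportions instead
ctable(x = dat$Species, y = dat$size, prop = "t")

# Bare minimum: counts only, no totals, no headings
ctable(
  x = dat$Species, y = dat$size,
  prop = "n", totals = FALSE, headings = FALSE
)

# With the Chi-square test of independence
ct <- ctable(x = dat$Species, y = dat$size, chisq = TRUE)
ct

# One table of smoker by diseased for each gender
stby(
  data = list(x = tobacco$smoker, y = tobacco$diseased),
  INDICES = tobacco$gender,
  FUN = ctable
)
```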

Descriptive statistics with descr()

The descr() function produces descriptive (univariate) statistics with common central tendency statistics and measures of dispersion. (See the difference between a measure of central tendency and dispersion if you need a reminder.)

A major advantage of this function is that it accepts single vectors as well as data frames. If a data frame is provided, all non-numerical columns are ignored so you do not have to remove them yourself before running the function.

The descr() function allows you to display:

- only a selection of descriptive statistics of your choice, with the stats = c('mean', 'sd') argument for mean and standard deviation for example

- the minimum, first quartile, median, third quartile and maximum with stats = 'fivenum'

- the most common descriptive statistics (mean, standard deviation, minimum, median, maximum, number and percentage of valid observations), with stats = 'common':

Tip: if you have a large number of variables, add the transpose = TRUE argument for a better display.

In order to compute these descriptive statistics by group (e.g., Species in our dataset), use the descr() function in combination with the stby() function:
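A sketch of these descr() calls, assuming {summarytools} is installed (res is an illustrative object name):

```r
library(summarytools)

dat <- iris

# Most common statistics; the factor Species is ignored
res <- descr(dat, headings = FALSE, stats = "common")
res

# Selected statistics, variables displayed in rows
descr(dat, stats = c("mean", "sd"), transpose = TRUE)

# Same statistics computed for each species
stby(
  data = dat,
  INDICES = dat$Species,
  FUN = descr,
  stats = "common"
)
```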

Data frame summaries with dfSummary()

The dfSummary() function generates a summary table with statistics, frequencies and graphs for all variables in a dataset. The information shown depends on the type of the variables (character, factor, numeric, date) and also varies according to the number of distinct values.
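A minimal call, assuming {summarytools} is installed, could be:

```r
library(summarytools)

dat <- iris

# One row per variable with statistics, frequencies and text graphs
dfs <- dfSummary(dat)
dfs
```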

describeBy() from the {psych} package

The describeBy() function from the {psych} package reports several summary statistics (i.e., number of valid cases, mean, standard deviation, median, trimmed mean, mad: median absolute deviation (from the median), minimum, maximum, range, skewness and kurtosis) by a grouping variable.
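For example, assuming {psych} is installed and Species is the grouping variable. Restricting the call to the four numeric columns is a choice made here to keep the output simple.

```r
library(psych)

dat <- iris

# Summary statistics of the numeric variables for each species
res <- describeBy(dat[, 1:4], group = dat$Species)
res
```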

aggregate() function

The aggregate() function splits the data into subsets and then computes summary statistics for each. For instance, if we want to compute the mean for the variables Sepal.Length and Sepal.Width by Species and size:
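This grouped mean could be computed as in the sketch below, with size built from Sepal.Length as in the section on contingency tables (agg is an illustrative object name):

```r
dat <- iris
dat$size <- ifelse(dat$Sepal.Length < median(dat$Sepal.Length),
  "small", "big"
)

# Mean of two variables for each combination of Species and size
agg <- aggregate(
  cbind(Sepal.Length, Sepal.Width) ~ Species + size,
  data = dat,
  FUN = mean
)
agg
```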

Thanks for reading. I hope this article helped you to do descriptive statistics in R. If you would like to do the same by hand or understand what these statistics represent, I invite you to read the article 'Descriptive statistics by hand'.

As always, if you have a question or a suggestion related to the topic covered in this article, please add it as a comment so other readers can benefit from the discussion.

¹ Normality tests such as the Shapiro-Wilk or Kolmogorov-Smirnov tests can also be used to test whether the data follow a normal distribution or not. However, in practice, normality tests are often considered too conservative in the sense that, for large sample sizes, a small deviation from normality may cause the normality condition to be rejected. For this reason, it is often the case that the normality condition is verified based on a combination of visual inspections (with histograms and QQ-plots) and a formal test (the Shapiro-Wilk test for instance).

² Note that the plain.ascii and style arguments are needed for this package. In our examples, these arguments are added in the settings of each chunk so they are not visible.

³ Note that it is also possible to compute odds ratio and risk ratio. See the vignette of the package for more information on this matter as these ratios are beyond the scope of this article.

Introduction

In this lesson, we learn how to find the original price, given the sale price and percent discount.

Rules to find the original price given the sale price and percent discount

First consider the unknown original price as 'x'.

Then consider the rate of discount.

To find the actual discount, multiply the discount rate by the original amount 'x'.

To find the sale price, subtract the actual discount from the original amount 'x' and equate this to the given sale price.

Solve the equation and find the original amount 'x'.

Example 1: A desk is being sold at a 36% discount. The sale price is $496. What was its original price?

Solution

Step 1:

Let the original price be = x

Discount rate = 36%

Step 2:

Discount = 36% of x = 0.36 × x = 0.36x

Sale price = Original price − Discount = x − 0.36x = 0.64x

Step 3:

Sale price = $496 = 0.64x

Solving for x

x = $\frac{496}{0.64} =$ $775

So, original price = $775

Example 2: If a Play Station was bought for $558 after a 10% discount, what was the original price of the Play Station?

Solution

Step 1:

Let the original price be = x

Discount rate = 10%

Step 2:

Discount = 10% of x = 0.10 × x = 0.1x

Sale price = Original price − Discount = x − 0.1x = 0.9x

Step 3:

Sale price = $558 = 0.9x

Solving for x

x = $\frac{558}{0.9} =$ $620

So, original price = $620
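The two examples above can be checked with a short R sketch. The function original_price is a hypothetical helper written for this illustration; it applies the rule sale price = (1 − discount rate) × x, solved for x.

```r
# Hypothetical helper: not part of any package
original_price <- function(sale_price, discount_rate) {
  # sale price = x - rate * x = (1 - rate) * x
  sale_price / (1 - discount_rate)
}

# Example 1: 36% discount, sale price of $496
original_price(496, 0.36)

# Example 2: 10% discount, sale price of $558
original_price(558, 0.10)
```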